It is same as single port CXL Host bridge.ĪCPI0016 and ACPI0017 objects are created using PcieAcpiTableGenerator.Dxe at runtime and passed to kernel. There is no real interleaving address windows across multiple ports with this configuration. In CFMWS structure, Interleave target number is considered 1 for demonstrating a reference solution with CEDT structures in the absence of interleaving capability in current FVP model. The remote CXL memory will be represented to kernel as Memory only NUMA node.Īlso, CEDT structures, CHBS and CFMWS are created and passed to kernel.

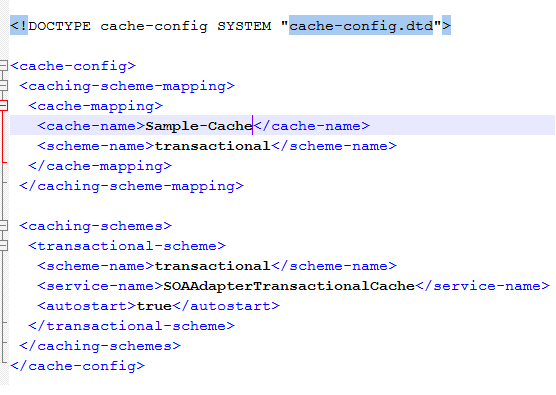

Prepare HMAT table with required proximity, latency info. It would prepare SRAT table with both Local memory, remote CXL memory nodes, along with other necessary details. It will use CXLPlatformProtocol interfaces and get the previously discovered remote CXL memory details. The operation is similar to what is done in SCP firmware, that’s explained above.Īfter enumerating complete PCIe topology, all remote memory node details will be stored in local data structure and CXLPlatformProtocol interface will be installed.ĪCPITableGenerator module dynamically prepares ACPI tables. If DOE operation is supported then send DOE command and get remote memory details in the form of CDAT tables (DSMAS). It first looks for PCIe devices with extended capability and then check whether the device supports DOE.

This discovery process begins based on notification received on installation of gEfiPciEnumerationCompleteProtocolGuid. with target HAID, host memory base address and size for accessing remote CXL memory.Ī new CXL.Dxe is introduced that looks for PCIe device with CXL and DOE capability. HNF_SAM_CCG_SA_NODEID_REG HNF_SAM_HTG_CFG3_MEMREGION HNF_SAM_HTG_CFG2_MEMREGION HNF_SAM_HTG_CFG1_MEMREGION Configured HN-F CCG SA node IDs and CXL.Mem region in HNF-SAM HTG in following order. CXL module would call CMN module API for doing the necessary interconnect configuration.ĬMN module configures HN-F Hashed Target Region(HTG) with the address region reserved for Remote CXL Memory usage, based on the discovered remote device memory size. Software data structure for remote memory will have information regarding CXL Type-3 Device Physical memory address, size and memory attributes. Read the CXL device DPA base, DPA length from DSMAS structures and save the same into internal Remote Memory software Data Structure.Īfter completing the enumeration process pcie_enumeration module would invoke CXL module API to map remote CXL memory region into Host address space and do necessary CMN configuration. DOE operation sequence is implemented following DOE-ECN 12Mar-2020.Ĭheck for CXL object’s DOE busy bit and initiate DOE operation accordingly for fetching CXL CDAT Structures(DSMAS supported at latest FVP model). Once found, execute DOE operations to fetch CDAT structure and understand CXL device memory range supported. pcie_enumeration module invokes CXL module API to determine the same for each of the detected PCIe device.ĬXL module will also determine whether CXL device has DOE capability. Pcie_enumeration module performs PCIe enumeration and as part of the enumeration process it is also checked whether a PCIe device supports CXL Extended Capability. 
This address space is part of SCG and configured as Normal cacheable memory region.ĬMN-700 is the main interconnect, which will be configured for PCIe enumeration and topology discovery. CXL Software Overview ¶Īt Host address space 8GB address space, starting at, 3FE_0000_0000h is reserved for CXL Memory. CXL Type-3 device supports CXL.io and CXL.mem protocolĪnd acts as a Memory expander to the Host SOC. At present, CXL support has been verified This document explains CXL 2.0 Type-3 device (Memory expander) handling on The above representation shows how CXL Type-3 device is modeled on Neoverse N2 CXL is built on the PCIĮxpress (PCIe) physical and electrical interface with protocols in three keyĪreas: input/output (I/O), memory, and cache coherence. Compute Express Link (CXL) ¶ Overview of CXL test ¶Ĭompute Express Link (CXL) is an open standard interconnection for high-speedĬentral processing unit (CPU)-to-device and CPU-to-memory, designed toĪccelerate next-generation data center performance.
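The DSMAS read and the mapping into the reserved host window can be illustrated with a small Python model. This is a sketch under stated assumptions, not the SCP firmware or CXL.Dxe code: the byte layouts (16-byte CDAT header, 4-byte entry header, DSMAS as type 0 carrying a 64-bit DPA base and DPA length) follow my reading of the CDAT specification and should be checked against it, and the function names `parse_dsmas` and `map_to_host_window`, as well as the policy of packing regions back to back from the window base, are hypothetical.

```python
import struct

# Assumed CDAT layouts (little-endian, no padding); verify against the CDAT spec.
CDAT_HEADER = struct.Struct("<IBB6sI")   # length, revision, checksum, reserved, sequence
ENTRY_HEADER = struct.Struct("<BBH")     # type, reserved, entry length
DSMAS_BODY = struct.Struct("<BB2sQQ")    # handle, flags, reserved, DPA base, DPA length
DSMAS_TYPE = 0

# Host window reserved for CXL memory, as stated in the text.
CXL_WINDOW_BASE = 0x3FE_0000_0000
CXL_WINDOW_SIZE = 8 * 1024**3


def parse_dsmas(cdat: bytes):
    """Return a list of (dpa_base, dpa_length) tuples found in a CDAT blob."""
    total_len = CDAT_HEADER.unpack_from(cdat, 0)[0]
    if total_len > len(cdat):
        raise ValueError("CDAT length field exceeds buffer size")
    regions = []
    offset = CDAT_HEADER.size
    while offset + ENTRY_HEADER.size <= total_len:
        entry_type, _reserved, entry_len = ENTRY_HEADER.unpack_from(cdat, offset)
        if entry_len < ENTRY_HEADER.size or offset + entry_len > total_len:
            raise ValueError("malformed CDAT entry")
        if entry_type == DSMAS_TYPE:
            if entry_len < ENTRY_HEADER.size + DSMAS_BODY.size:
                raise ValueError("DSMAS entry too short")
            fields = DSMAS_BODY.unpack_from(cdat, offset + ENTRY_HEADER.size)
            regions.append((fields[3], fields[4]))
        offset += entry_len  # skip to the next entry, whatever its type
    return regions


def map_to_host_window(regions):
    """Place each region in the reserved host window, rejecting overflow.

    Packing regions sequentially from the window base is an assumption
    made for this sketch.
    """
    window_end = CXL_WINDOW_BASE + CXL_WINDOW_SIZE
    next_free = CXL_WINDOW_BASE
    mapped = []
    for dpa_base, dpa_len in regions:
        if next_free + dpa_len > window_end:
            raise ValueError("remote memory does not fit in the CXL window")
        mapped.append({"dpa_base": dpa_base, "size": dpa_len, "host_base": next_free})
        next_free += dpa_len
    return mapped


if __name__ == "__main__":
    # Build a synthetic CDAT blob holding one 1 GiB DSMAS entry.
    entry = ENTRY_HEADER.pack(DSMAS_TYPE, 0, 24) + DSMAS_BODY.pack(0, 0, b"\0\0", 0, 1 << 30)
    blob = CDAT_HEADER.pack(CDAT_HEADER.size + len(entry), 1, 0, b"\0" * 6, 0) + entry
    for region in map_to_host_window(parse_dsmas(blob)):
        print(hex(region["host_base"]), hex(region["size"]))
```

The bounds check in `map_to_host_window` mirrors the constraint implied by the text: whatever size the device reports through DSMAS, the region configured in the interconnect has to stay within the 8GB reserved at 3FE_0000_0000h.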
